“Best not analyze too deeply on this one, huh?”

A long time ago I promised a guy named Sam that I would dig up some examples of PowerPivot compression. I then, of course, forgot all about it until today. Sorry Sam.

But today I was playing with a data set on my desktop machine that was really getting me down. The data was provided as a (regular, non-PowerPivot) Excel file with 500K rows in it. And my machine labored to do anything at all with that data – opening it took forever. Deleting a single row took forever. And so on.

But this was the same machine I had used to do a PowerPivot demo with a 100M row data set, which ran with no problem! 0.5% of the data was bogging me down in Excel!

So I decided to do a “before and after” comparison.

Hypothesis

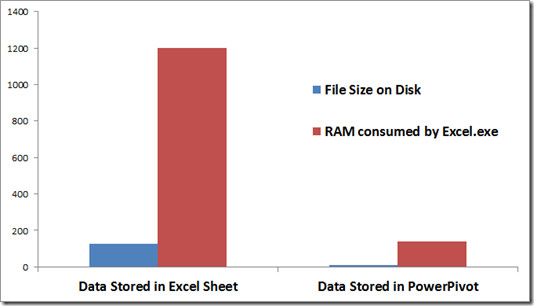

Based on my knowledge of PowerPivot compression, I expected that PowerPivot would do a little bit better than Excel on disk, and a lot better in RAM.

Didn’t quite turn out that way 🙂

Results

|

Data Stored in Excel Sheet |

Data Stored in PowerPivot |

|

| File Size on Disk |

126 MB |

10.2 MB |

| RAM consumed by Excel.exe |

1200 MB |

140 MB |

Wow. 8x better in RAM, 12x better on disk! (I was SO tempted to use a pie chart. Just kidding).

Good thing I ran the experiment twice!

First time I did this, the PowerPivot RAM number was 600 MB. Still 2x better than Excel, but 8x is much better 🙂

Not quite sure what was going on with that 600 MB number. I’d had Excel open for awhile and probably hadn’t closed Excel.exe completely after closing the native Excel file. Probably that was it.

OK, don’t analyze! Rejoice!

Bottom line: 12x better on disk and 8x better in RAM, no matter what, is tremendous. Not to mention the performance of working with the data and how much better that is.

And PowerPivot’s data compression ratio only gets better with larger data sets. 500k rows is big, yes, but remember, this same computer handled 100M, which I obviously can’t even test out in Excel for comparison.

Now that the data is loaded into PowerPivot, I don’t even have to use my desktop machine to work with it. I’m switching over to my laptop. The laptop is even running 32-bit whereas the desktop is 64. But hey, the laptop fits on [link removed due to 404] my new treadmill desk 🙂

And my waistline could use some compression.